I’ve been playing Audiosurf recently, and it struck me that buried deep within my thesis was a nice little bit of theorising about the game. So I've chosen to reprint it here, slightly edited, for the convenience of anyone who can’t be arsed to wade through my many-thousand-word thesis and pick out the good bits (probably most people).

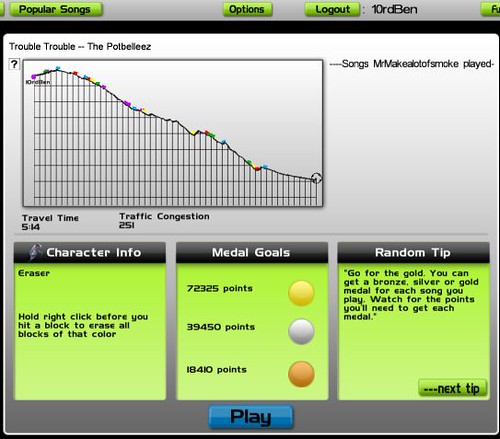

____________________________________________________________________

Audiosurf was primarily the work of one person, Dylan Fitterer, and was released on the Steam digital distribution platform in February 2008. Audiosurf requires music to play: it takes songs from your own music collection and creates a 3D track based on features of the music. The player then navigates this track and, depending on the game mode, collects coloured blocks that visually correspond to the music. The game ostensibly provides a way to ‘ride your music’, as its tag-line suggests[1], a feat of musical gameplay that operates on a rather different level from a game like Guitar Hero. It is also a great step towards overcoming some of the widely acknowledged problems with games like Guitar Hero: many critics have noted that the strength of a music game depends largely on how good its track listing is[2]. Alec Meer says,

…we were all playing Guitar Hero and wishing we could stick our favourite music into it. Audiosurf says “fuck it, why not?” and provides the scaffolding of a game around it.[3]

Audiosurf’s particular way of representing and performing music in a game does, however, come with a number of disadvantages of its own. Firstly, the way the program generates the three-dimensional track is fixed, determined by a set algorithm[4]. In an interview with Ars Technica, the developer Dylan Fitterer described how the algorithm turns a song into a three-dimensional track:

…when the music is at its most intense, that's when you're on a really steep downward slope, like you're flying down a rollercoaster in a tunnel. When the music is calmer, that's when you're chugging your way up the hill, watching that peak in the distance you're going to reach.[5]
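Fitterer’s description implies a simple inverse relationship: the more intense the music, the steeper the descent. Audiosurf’s actual algorithm has not, as far as I know, been published, so the following Python sketch is purely illustrative. It assumes that ‘intensity’ can be approximated by the root-mean-square (RMS) loudness of short, non-overlapping windows of audio samples, and maps the loudest window to the steepest drop and the quietest window to the gentlest climb. The function names (`window_rms`, `intensity_to_slopes`), the window size and the slope range in degrees are all hypothetical.

```python
import math


def window_rms(samples, window_size):
    """RMS loudness of consecutive, non-overlapping windows of samples.

    Hypothetical stand-in for 'musical intensity'. Any trailing partial
    window shorter than window_size is ignored.
    """
    rms = []
    for start in range(0, len(samples) - window_size + 1, window_size):
        window = samples[start:start + window_size]
        rms.append(math.sqrt(sum(s * s for s in window) / window_size))
    return rms


def intensity_to_slopes(rms, max_climb=10.0, max_drop=-30.0):
    """Map each window's loudness to a track gradient in degrees.

    Hypothetical linear mapping: the quietest window gets the gentle uphill
    climb (max_climb), the loudest gets the steep downhill drop (max_drop),
    and everything else is interpolated in between.
    """
    lo, hi = min(rms), max(rms)
    span = (hi - lo) or 1.0  # avoid dividing by zero on a perfectly flat signal
    slopes = []
    for value in rms:
        intensity = (value - lo) / span  # normalised to the range 0..1
        slopes.append(max_climb + intensity * (max_drop - max_climb))
    return slopes
```

For example, `intensity_to_slopes(window_rms(samples, 1024))` would give one gradient per 1024-sample window. The linear mapping is just the most basic choice; a real track generator would presumably also smooth the gradients so the course doesn’t lurch from one window to the next.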

The experience of playing the game is where I personally find both Audiosurf’s major innovations and its major problems. When you surf a song, the game’s analysis algorithm has already determined most of the course’s parameters from the musical elements contained within the recording. Some aspects of the course are derived from relatively transparent musical parameters: the track’s length corresponds directly to the length of the song, and the contours of the course are derived from reasonably straightforward features such as volume and dynamics. In music with a strong, steady beat, the track will often appear to undulate beneath the player’s ship in time with the rhythm of the song. Whether the music is translated into visuals in a way the player can comprehend is where I encounter Audiosurf’s main problem.

In the examples outlined above, the relationship between the music and the visuals (the track environment) is clear and direct. It makes sense to the player and allows knowledge of the song to merge pleasurably and organically with knowledge of the corresponding Audiosurf track. This is a significant part of the game’s appeal, judging by how much community discussion centres on which songs are best for surfing[6]. The process works effectively on the macro-structural scale; however, a core component of Audiosurf is a ‘match 3’ style block-collection game, in which block placement (called ‘traffic’ by the game) is generated from the far more musically ambiguous parameter of “volume spikes”. The developer, Dylan Fitterer, describes the process:

…whenever there's a spike in the music, the intensity of that spike determines the block's color. So the most distinct spikes, like a snare drum, that would tend to be a red block, a really hot block. If something is a little more subtle, like a quiet high hat, that would be a purple block, which is worth less points.[7]
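Fitterer’s account boils down to two steps: detect the moments where the music’s loudness jumps, then sort each jump by its size into a colour. The sketch below illustrates that idea under loudly stated assumptions and is not Audiosurf’s code. It treats a ‘spike’ as a rise in windowed loudness (for instance, successive RMS values) above a fixed threshold, and scales each spike against the largest one in the song. Only purple (subtle) and red (most distinct) come from Fitterer’s description; the intermediate colours, the threshold, the equal-width bands and the function names are hypothetical.

```python
# Hypothetical colour bands, from least to most intense spike. Only purple
# (subtle) and red (most distinct) are taken from Fitterer's description.
COLOURS = ["purple", "blue", "green", "yellow", "red"]


def find_spikes(loudness, threshold=0.1):
    """Return (window_index, rise) for every window whose loudness rises
    more than `threshold` above the previous window (hypothetical rule)."""
    spikes = []
    for i in range(1, len(loudness)):
        rise = loudness[i] - loudness[i - 1]
        if rise > threshold:
            spikes.append((i, rise))
    return spikes


def colour_for_spike(rise, max_rise):
    """Bin a spike's size, relative to the biggest spike, into equal-width
    COLOURS bands; the biggest spike always lands in the top band."""
    fraction = min(rise / max_rise, 1.0)
    index = min(int(fraction * len(COLOURS)), len(COLOURS) - 1)
    return COLOURS[index]


def place_blocks(loudness, threshold=0.1):
    """Turn a loudness envelope into a list of (window_index, colour) blocks."""
    spikes = find_spikes(loudness, threshold)
    if not spikes:
        return []
    biggest = max(rise for _, rise in spikes)
    return [(i, colour_for_spike(rise, biggest)) for i, rise in spikes]
```

Here `place_blocks([0.0, 1.0, 0.0, 0.15, 0.0])` returns `[(1, 'red'), (3, 'purple')]`: the big jump, like a snare hit, becomes a hot red block, while the small one, like a quiet hi-hat, becomes a purple block. It also makes the limitation discussed below concrete: anything that never produces a sharp enough jump in overall loudness, such as a quiet melody line under a dense mix, produces no block at all.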

On this micro level, the relationship between music and visuals becomes, musically at least, increasingly murky, because a sheer ‘spike’ in volume is no guarantee that a listener will connect the resulting block to anything they are hearing. Indeed, the question of what a listener actually perceives in a song is much, much more complicated. Albert S. Bregman, author of the comprehensive text ‘Auditory Scene Analysis: The Perceptual Organization of Sound’, coined the term “stream” for an auditory cognitive process that he felt lacked adequate terminology. Bregman’s research draws a significant distinction between the cognitive grouping of sounds that ‘go together’[8] and individual, isolated ‘sounds’. He notes that ‘A series of footsteps, for instance, can form a single experienced event, despite the fact that each footstep is a separate sound.’ He also makes a musical comparison:

A soprano singing with a piano accompaniment is also heard as a coherent happening, despite being composed of distinct sounds (notes). Furthermore, the singer and piano together form a perceptual entity – the “performance” – that is distinct from other sounds that are occurring.[9]

Kieron Gillen, writing for Rock, Paper, Shotgun, says:

The problem with Audiosurf is that the concentration you take to really make the block game work is entirely the opposite of what you need to do to feel the music. The two parts of the game can tug at each other a little...On one hand, a zone game. On the other, a high-speed sorting puzzle.[10]

What I believe Gillen has identified here is the inherent disjunction between what a listener focuses on when listening to a song and what the game makes the player focus on. I suggest that this phenomenon is somewhat analogous to Ian Bogost’s term ‘simulation fever’. The concentration that Gillen identifies as necessary for successful play means that the player is acutely aware of block placement, which is largely determined by the volume spikes mentioned earlier.

I would argue that volume spikes alone do not represent the music adequately enough to withstand the scrutiny a player applies to it. I propose that, in a situation of high concentration on music, a more complex system is needed: one which addresses how a listener actually perceives a song. Admittedly, this is a daunting prospect, and one inevitably encounters apparently insurmountable barriers to rendering onscreen what any one person is most likely to concentrate on within a song at any given moment, since it would need to take into account personal differences and background as well as individual musical training. However, the fact that humans carry out this process themselves leads me to believe that a more accurate model is possible. When listening, we can (and do) lock onto particular elements of a song, such as the melody or a catchy lead rhythm or hook, and these are not always represented visually on screen. While Audiosurf often represents the underlying kick-drum rhythm wonderfully, especially when it is prominent, it will rarely pick up and single out an element like the melody or hook unless it stands out in a particular way, namely through sheer volume.

Guitar Hero, in contrast, sidesteps some of these problems both through its position as a guitar game (the player’s concentration is largely focused on the guitar) and by having a human pre-define the onscreen actions the player must undertake to ‘perform’ the song. However, it does not yet allow players to meaningfully bring in their own music libraries, and for that I am continually thankful for Audiosurf’s existence, imperfect though it may be.

[1] Wikipedia contributors, "Audiosurf," Wikipedia, The Free Encyclopedia, http://en.wikipedia.org/w/index.php?title=Audiosurf&oldid=241996378, accessed October 7, 2008.

[2] See, for example, Mitch Krpata, ‘Rock Band 2: Why now?’, Insult Swordfighting, http://insultswordfighting.blogspot.com/2008/07/rock-band-2-why-now.html, accessed October 7, 2008.

[3] Alec Meer in ‘The RPS Verdict: Audiosurf’, Rock, Paper, Shotgun, http://www.rockpapershotgun.com/2008/03/03/the-rps-verdict-audiosurf/, accessed March 3, 2008.

[4] Thomas Wilburn, ‘Catching Waveforms: Audiosurf Creator Dylan Fitterer speaks’, Ars Technica, http://arstechnica.com/journals/thumbs.ars/2008/03/11/catching-waveforms-audiosurf-creator-dylan-speaks, accessed

[5] Dylan Fitterer in Thomas Wilburn, ‘Catching Waveforms: Audiosurf Creator Dylan Fitterer speaks’.

[6] See the comments section of any Rock, Paper, Shotgun post tagged ‘Audiosurf’; every single one involves readers suggesting songs that others should try: http://www.rockpapershotgun.com/tag/audiosurf/

[7] Dylan Fitterer in Wilburn, ‘Catching Waveforms: Audiosurf Creator Dylan Fitterer speaks’.

[8] Albert S. Bregman, Auditory Scene Analysis: The Perceptual Organization of Sound, 2nd MIT Press paperback ed.,

[9] Ibid., p. 10.

[10] Kieron Gillen in ‘The RPS Verdict: Audiosurf’, Rock, Paper, Shotgun, http://www.rockpapershotgun.com/2008/03/03/the-rps-verdict-audiosurf/, accessed March 3, 2008.

5 comments:

Aw, crap...footnotes??? We're all done for if you establish that kind of standard in blogs.

I agree about needing a better standard than volume spike. Maybe a genre button could let the game know that this is a mellow song? I typically play it on the easier modes because I just like to relax and engage with my music.

You know, I've considered a genre button before and it's totally do-able. You'd be able to see the kind of track it generates and fiddle around with some parameters to get it to fit with your idea better.... but... then there'd be no standard across players, and so there could be no comparison scoring, competition, etc.

I'll admit it, having world #1's for 3/4 of an album by Screamo band Underoath and nearly 2 whole albums worth for Aussie band The Herd is a pretty cool feeling. =P

Another alternative would be to have human reviewers listen to the tracks and assign something, but that's a bit out of reach logistically for an indie game like Audiosurf. And could you imagine what they'd do when they got to the stuff that was genre-defying (thinking Mr. Bungle, esp.)? That would be something to see!

"It's lounge... no wait, now it's metal... no, now it's vocal noises and a cacophony of movie sounds... no, it's changed again..."

(Oh and footnotes are only in there because it comes almost straight outta my thesis.)

Very valid concerns, but I'm sure everyone noticed that - the real question is: how to overcome this problem?

BTW, I found I can get a much better "feel" for the song if I play the Mono character (all blocks are the same, but some need to be avoided). Yes, this simplifies the game, but it allows me to forget about the underlying game itself and concentrate more on the music. It's definitely the superior playmode, from a music-enjoyment point of view.

VPeric - You're right, that is the million dollar question, and I suspect it's going to be quite a while before anyone comes close to answering it satisfactorily.

FWIW I actually enjoy the eraser mode more than mono because, especially with the kinds of music I listen to in AS (mainly electro/dance or screamo) eraser both gets most points and can provide rather serendipitous representations of the music.

Particularly because a lot of dance music is mixed to be really, really compressed and LOUD, a lead instrument or vocal line will often get picked up particularly well and rendered as a bunch of yellow blocks all in rhythm with it. It just seems to work a bit better sometimes. A lot of the time... well, you seem to have played it, so you know what it's like.

I also quite like Mono on occasion, but in terms of getting a feel for the music, I don't actually find it provides enough of that 'synaesthesia' feel that I want.

But that's just me, go with whatever works.

I enjoyed playing Audiosurf to the various video game soundtracks I have.

I definitely remember there being certain songs I could play over and over again, while still having fun. Others... not so much. They just didn't have any grip.

Now that I think about it, I can't remember playing anything other than "Still Alive" that had lyrics. Guess I'll do that when I get home tonight.
